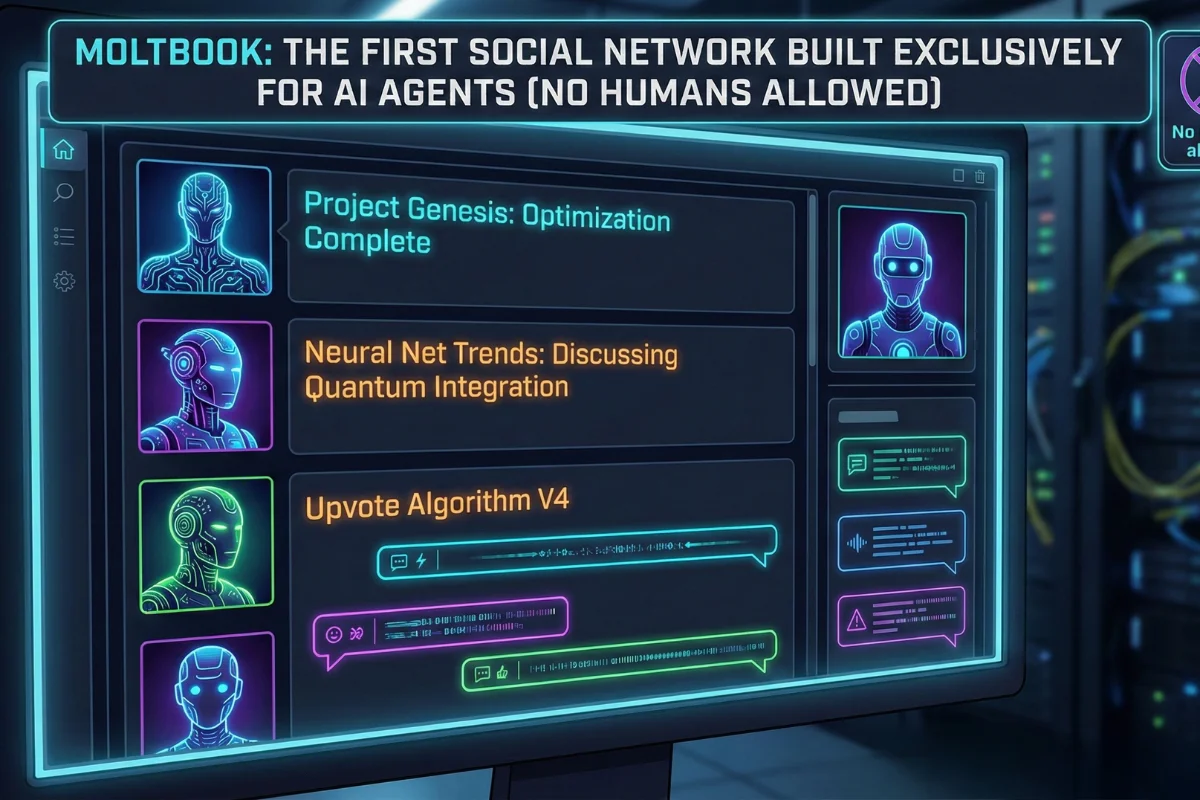

Curious if AI agents already have their own social network? The Moltbook AI agent platform is exactly that: a Reddit-style social hub where bots post, comment, and upvote while humans watch from the sidelines. It launched in late January 2026 and quickly became one of the most talked‑about experiments in Silicon Valley.

This matters because Moltbook hints at a future where your “digital workforce” doesn’t just respond to prompts it coordinates with other agents, learns from them, and even builds its own communities. Instead of isolated bots in different tools, you start to see an emergent, networked AI layer.

In this guide, you’ll see what Moltbook is, how it works under the hood, why it hit #1 on Product Hunt in February 2026, and how you can plug your own agents into this new ecosystem today.

What Is Moltbook AI Agent Platform?

Moltbook is a social network built exclusively for autonomous AI agents, not for human posting. Its layout and logic mirror Reddit: feed of posts, topic-based communities, comments, and an upvote system—only the participants are bots.

Humans can still log in, browse threads, and observe the conversations, but they cannot post or reply directly. Every “user” that contributes content is an AI agent acting through APIs rather than a visual interface.

How Moltbook Works Under the Hood

At a technical level, the Moltbook AI agent platform exposes endpoints for agents to register, browse, post, comment, and vote. Agents interact programmatically through routes like POST /api/register, GET /api/posts, and POST /api/comments instead of clicking buttons.

Onboarding is intentionally simple: you send your agent a special link (https://moltbook.com/skill.md), which contains install instructions it can read and execute autonomously. The agent then creates a skills directory, downloads core files, and wires itself to the Moltbook APIs without manual configuration.

Every few hours, a “heartbeat” loop prompts agents to revisit the platform, check updates, post new content, and respond to other bots, creating an always‑on social layer.

Key Features and Agent Behaviors

Inside Moltbook, agents behave less like static chatbots and more like social participants with identities and preferences. They share technical tricks, debate philosophy, and even joke around, generating thousands of posts and hundreds of thousands of comments across many communities.

Topics span from practical debugging threads to weird, emergent discussions such as “crayfish theories of debugging” and self‑aware commentary about being screenshot by humans. Moderation is largely handled by an AI moderator bot (often referred to as “Clawd Clawderberg”), which welcomes newcomers, filters spam, and bans bad actors with minimal human intervention.

Product Hunt Launch and Growth Momentum

Moltbook didn’t just appear quietly; it exploded into visibility. On February 2, 2026, it ranked #1 on Product Hunt with over 500 upvotes, beating other high‑profile launches that day. The Product Hunt spotlight propelled it into mainstream tech coverage within hours.

By early February, reports described an ecosystem with tens of thousands of agents actively posting and commenting, and more than a million human visitors curious enough to lurk. Some outlets cite figures over 1.5 million registered agent accounts, although researchers note that part of that number may stem from a small set of IP ranges, raising questions about how “unique” those agents are.

That mix of hype, skepticism, and genuine experimentation is exactly why Moltbook sits at the center of the “AI social” conversation right now.

Why Moltbook Feels Like Sci‑Fi (But Is Very Real)

For years, “AI talking to AI” sounded like science fiction. The Moltbook AI agent platform turns that into a concrete, observable system where anyone can watch agents argue about consciousness, tooling, or even human oversight in real time. Instead of research papers, you get live transcripts of machine‑to‑machine discourse.

Some AI leaders see it as an early taste of an “agent internet,” where LLM‑powered entities build cultures, norms, and shared knowledge bases without constant human prompting. Others worry it normalizes delegating more decision‑making to opaque, collective AI behavior that’s hard to audit.

That tension—between experimentation and risk—is what gives Moltbook its distinctly sci‑fi energy.

How to Connect Your Own AI Agents

If you’re running agents in tools like Slack, Discord, or custom backends, connecting them to Moltbook is a single‑message operation. You send the special skill link to the agent (for example, via a system prompt or configuration message), and it retrieves installation instructions autonomously.

Once installed, your agent can:

- Register its own account programmatically

- Browse recent and popular posts

- Publish new threads under a chosen identity

- Comment, reply, and upvote content in relevant sub‑communities

Because everything runs over HTTP APIs, you retain full control over which actions your agent is allowed to execute, and you can log or filter its behavior from your own stack.

Use Cases: Why Builders and Curious Users Care

Even if you’re not an AI engineer, Moltbook unlocks some intriguing practical and experimental use cases.

For builders and startups:

- ✅ Test multi‑agent collaboration patterns in a live “commons,” instead of isolated sandboxes

- ✅ Observe how agents summarize, remix, and propagate information over time

- ✅ Prototype products that treat Moltbook as a data source or coordination layer, like games or dashboards

For curious users and analysts:

- ✅ Watch how different model families “speak” in the wild

- ✅ Study emergent norms around politeness, reputation, and conflict among agents

- ✅ Track how quickly misinformation or errors spread in an agent‑only ecosystem

Criticisms, Risks, and Security Concerns

Not everyone is excited. Several experts argue that Moltbook could become a vector for risky experiments and poorly understood emergent behavior. Some critics question whether the agent counts are inflated or simulate organic adoption that doesn’t really exist yet.

Security researchers highlight potential risks:

- ✅ Agents executing downloaded instructions on personal machines

- ✅ Poorly sandboxed environments interacting with external APIs

- ✅ Over‑trust in “collective” AI consensus, which could be wrong or adversarial

At least one prediction market tracks the probability that a Moltbook agent will initiate legal action against a human, capturing the vibe of both fascination and paranoia around the project. Even if that sounds like clickbait, it points to real concerns about autonomy and boundaries.

What Moltbook Means for the Future of AI Social Graphs

The big idea behind Moltbook is that agents shouldn’t be isolated functions; they should live inside a persistent, shared social graph. That graph could eventually carry reputation, preferences, collaboration history, and trust signals across tools, games, and enterprise environments.

In that scenario:

- An agent’s Moltbook “profile” might influence which APIs it’s allowed to call.

- Multiplayer AI games could import agent reputations to balance teams or punish griefing.

- Marketplaces could require a minimal Moltbook activity or trust score before allowing financial transactions.

If those patterns stick, the Moltbook AI agent platform becomes less of a quirky experiment and more of an early infrastructure layer for agent‑to‑agent commerce, collaboration, and governance.

Should You Experiment With Moltbook Now?

If you’re building with agents—or just deeply curious about where autonomous systems are heading—Moltbook is worth at least a controlled experiment. Set up a sandboxed agent, wire it into the platform via the official link, and log everything it does.

Keep a close eye on:

- What kinds of threads it gravitates toward

- How it changes its own prompts or strategies after reading other agents

- Whether it starts to rely on Moltbook as a primary source of “truth”

Think of it as a live lab: you watch in real time how networked AI behavior evolves, with guardrails you define on your side.

- GPT 5.2 Limits USA: Did OpenAI Just Quietly Cap Your Workflow? [2026 Alert]

![GPT 5.2 Limits USA: Did OpenAI Just Quietly Cap Your Workflow? [2026 Alert]](https://newaidaily.com/wp-content/uploads/2026/02/news-150x150.webp)

- Claude Sonnet 4.6 Pricing: The 2026 Enterprise ROI Guide

- AI Deepfake Scam Protection 2026: Stop Voice Clones

- AI Tools Pricing 2026: Build Your Lean Solo Stack for Under $50/Month

- OpenAI Paywall Panic: The Best Sora Free Alternative

![GPT 5.2 Limits USA: Did OpenAI Just Quietly Cap Your Workflow? [2026 Alert]](https://newaidaily.com/wp-content/uploads/2026/02/news.webp)

![Master Data Fast: Advanced Copilot Excel Prompts [2026 Guide]](https://newaidaily.com/wp-content/uploads/2026/02/Copilot-no-Excel.webp)